Of all the A1 (audio first) mix positions across live music, event production, theater, and sports, the most challenging is arguably sports, because the A1 must split attention between all the field inputs and all the intercom chatter. While an A1 in a music venue only needs to focus on the band, the sports A1 is constantly distracted by executives in the truck demanding changes to an ancillary feed while, at the same time, the video director wants an adjustment to the talent’s IFB (Interruptible Foldback). Almost all live A1s in significant venues have an A2 (audio second) copilot, but sports A1s generally do not. They fly alone. As a result, the important capture and processing of audio from the field often takes a back seat to the more immediate needs of the truck and broadcast team, so the broadcast mix suffers not from a lack of skill but from a lack of attention.

Live broadcast audio capture has grown steadily more demanding over time, starting with the benign envelope of mono, where everything fell into a single basket; through stereo, with its horizontal palette from left to right; on to 5.1 surround, with direct placement of signals into discrete channels (front, center, sub, and surround); and finally to Dolby Atmos (typically 5.1.4), which adds four overhead speakers for crowd response and ambience. The problem for most live sports A1 mixers is that they must create each of these discrete mix formats simultaneously while handling other chores and monitoring the intercom chatter. The outcome is typically a compromised mix. The tools and talent are there, but distraction keeps them from being fully realized.

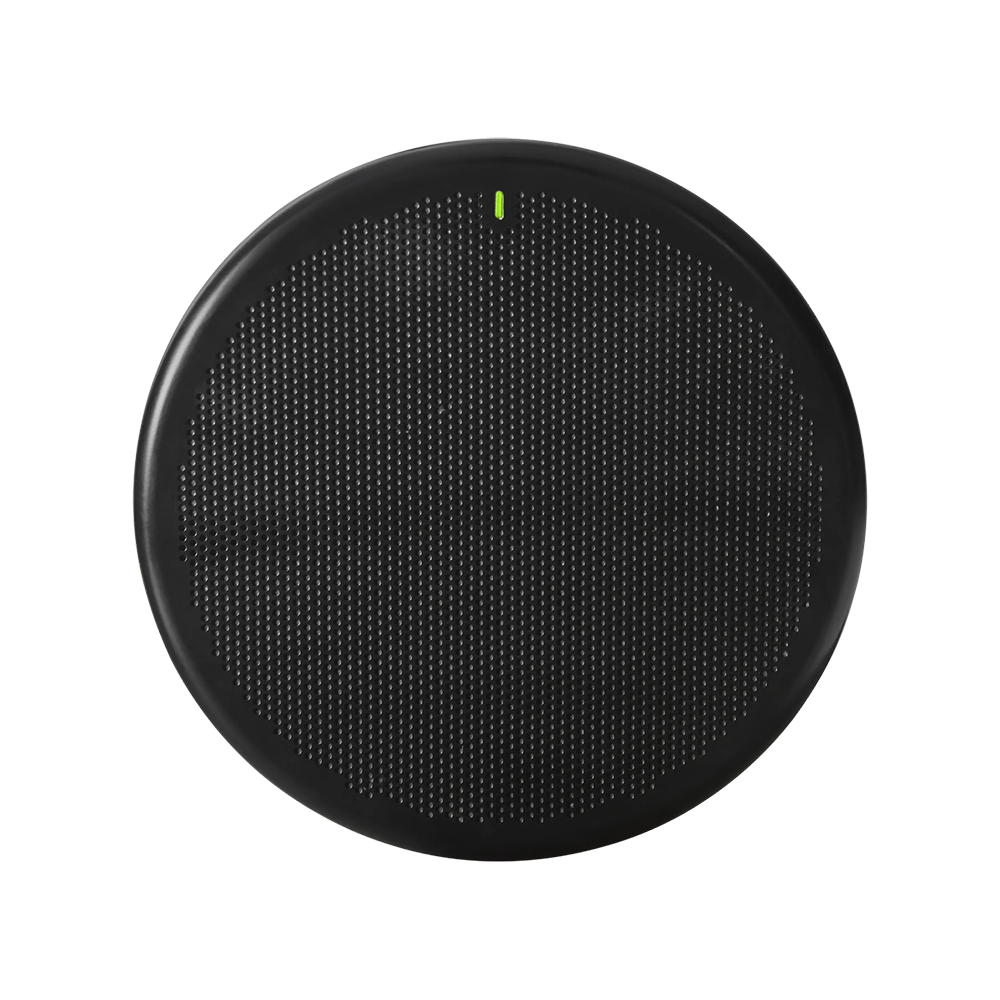

For Val Salomaki, CEO of EDGE Sound Research, the failures inherent in the current live sports mixing protocols led to underwhelming demos of his company’s groundbreaking Embodied Sound delivery technology. The hardware was there, but the mix was seldom correct. He then realized that EDGE could solve the capture and processing segments of the sonic triangle, beyond just the enhanced Embodied Sound presentation. To that end, EDGE has now partnered with Shure to create a variant of the DCA901 digital microphone array with EDGE digital signal processing. Tailored specifically for broadcast, the DCA901 captures eight isolated audio channels from a single device with steerable lobes to pick up specific areas of the field or court.

While the DCA901 itself is not unique in the industry — similar products, such as Sennheiser’s TeamConnect, are available — the differentiator for this variant of the DCA901 is the inclusion of EDGE’s Virtual Sound Engine (VSE), a deep learning package capable of dramatically improving the capture and isolation of sound as objects. The VSE streamlines production of live broadcasts through a combination of sophisticated software and effective processing tools.

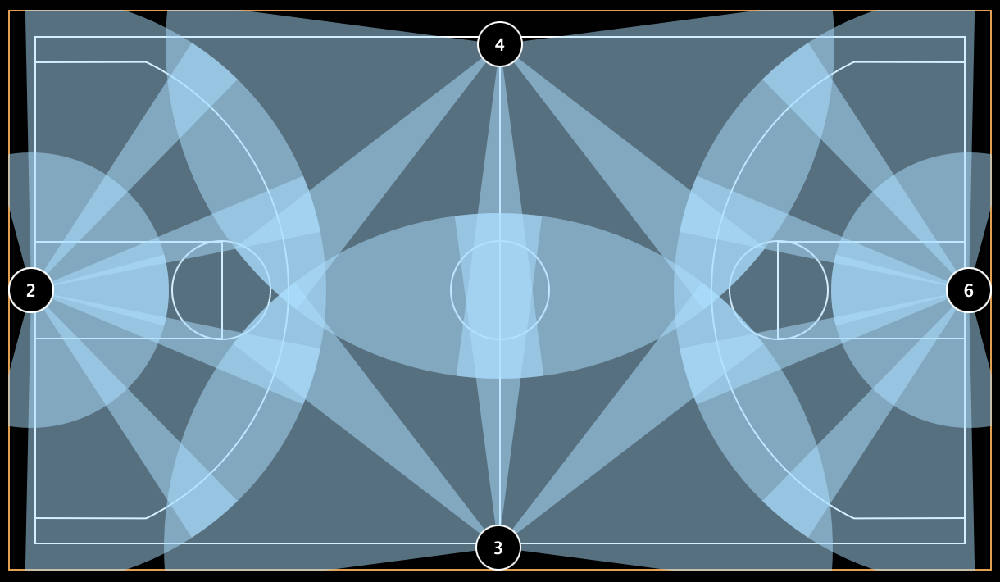

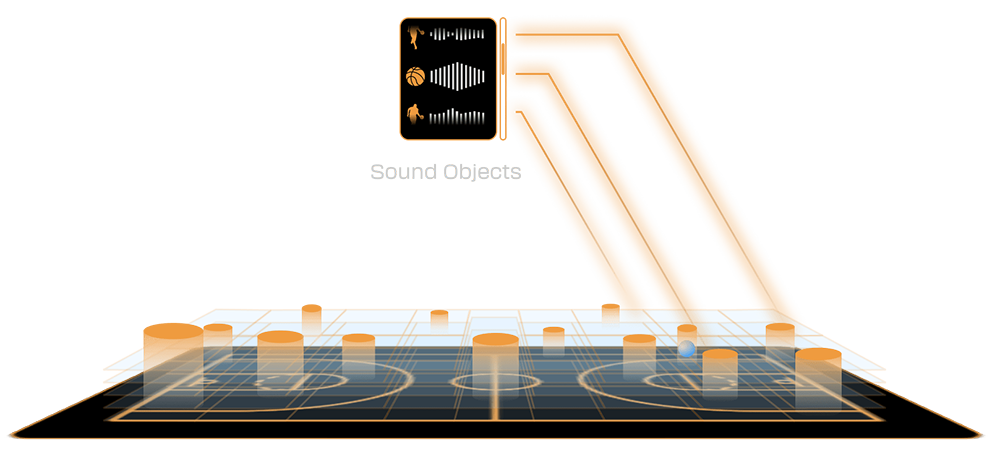

Traditional channel-based systems, such as 5.1 and 7.2, rely on manual mix control and, even with recent AI assistance, do not consistently convey the breadth and depth of the sonic action on the field. VSE moves beyond the limits of channel-based mixing to object-based mixing (OBM), which treats each audio source, such as a ball, a player, or a referee, as a distinct sound object and so allows precise, repeatable control. The key to success with OBM is increased capture resolution, which comes from VSE’s ability to create high-resolution sound maps of the action areas. Using object tracking data generated within the map, VSE’s focus function isolates the relevant area in real time and outputs a set of raw sound objects for use in either automated or human-controlled mixes.
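To make the channel-versus-object distinction concrete, here is a minimal Python sketch. It is not EDGE's VSE, whose internals are not described here. The `SoundObject` class, its `x` and `level` fields, and both render functions are hypothetical names chosen for illustration. The idea it shows is generic: an object carries position metadata, and each output format derives its own channel gains from that metadata at render time, so the same scene can feed a mono mix and a stereo mix without being mixed twice by hand. The stereo renderer uses ordinary constant-power panning.

```python
import math
from dataclasses import dataclass


@dataclass
class SoundObject:
    """Hypothetical sound object: a mono source plus position metadata."""
    name: str
    x: float      # lateral position: -1.0 (far left) to +1.0 (far right)
    level: float  # linear gain applied to the source


def render_mono(obj: SoundObject) -> float:
    """Mono has no position, so the object collapses to its level."""
    return obj.level


def render_stereo(obj: SoundObject) -> tuple[float, float]:
    """Constant-power pan: returns (left_gain, right_gain)."""
    x = max(-1.0, min(1.0, obj.x))      # clamp to the valid range
    angle = (x + 1.0) * math.pi / 4.0   # maps -1..+1 onto 0..pi/2
    return obj.level * math.cos(angle), obj.level * math.sin(angle)


# Illustrative scene; names and values are made up for the example.
scene = [
    SoundObject("ball", x=0.0, level=1.0),
    SoundObject("referee", x=-1.0, level=0.5),
]

for obj in scene:
    left, right = render_stereo(obj)
    print(f"{obj.name}: mono={render_mono(obj):.3f} L={left:.3f} R={right:.3f}")
```

A surround or Atmos renderer would follow the same pattern with more speakers and a height coordinate, which is why object metadata lends itself to producing several formats at once.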

When further refinement of the signal is needed, VSE supports advanced DSP modules including equalization, compression, delay, and automatic mixing to clean up game action, isolate player vocals, or balance crowd-noise elements. On the near horizon are abilities such as creating immersive, personalized audio for interactive media and volumetric audio, where intensity is correlated to preferred position.
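How VSE's automatic mixing works is not described here, so the following is only a sketch of one well-known approach, gain sharing, in which each channel receives a share of a fixed total gain in proportion to its current level. The function name `gain_sharing` and the example levels are assumptions made for illustration, and the levels are assumed to be non-negative linear measurements.

```python
def gain_sharing(levels: list[float]) -> list[float]:
    """Return one gain per channel; gains sum to 1 when the list is non-empty."""
    if not levels:
        return []
    total = sum(levels)
    if total <= 0.0:
        # No signal anywhere: share the gain equally rather than divide by zero.
        return [1.0 / len(levels)] * len(levels)
    return [level / total for level in levels]


# Hypothetical short-term levels for three sound objects.
names = ["referee", "crowd", "ball"]
gains = gain_sharing([2.0, 1.0, 1.0])
for name, gain in zip(names, gains):
    print(f"{name}: gain={gain:.2f}")
```

Because the gains always sum to one, the loudest source comes forward while the overall level stays steady, which is the kind of housekeeping that frees an A1's attention for other tasks.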

Shure and EDGE have cemented their relationship with a commitment to change how live broadcasts are captured, processed, and delivered. The new DCA901 is the first fruit of their cooperation, with more innovative products to come.

EDGE Sound Research teams up with Shure and Sweetwater to bring enhanced object-based mixing to audio capture and mixing for live sporting events.

In a similar vein, Sweetwater has signed a partnership agreement with EDGE that makes Sweetwater the sole retailer of Embodied Sound for both DIY and professional integration environments. Sweetwater’s belief in EDGE goes beyond simply selling the products and extends to co-developing product offerings in several market verticals, including high-end home theater, live sports events, and corporate environments.

By combining the new Shure DCA901’s broad palette of sonic energy with an incredible mix from the EDGE Virtual Sound Engine, any fan can be right in the middle of the action.